Cut Cross Entropy Deep Dive: 20x Memory Reduction in LLM Pretraining Through Optimized Triton Kernels

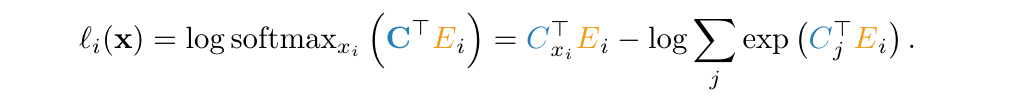

Introduction

Whilst working on pretraining SabiYarn in 2025, I came across a paper by a team at Apple, “Cut Your Losses in Large-Vocabulary Language Models”, with a very interesting proposition: the cross entropy loss function has a memory problem that has quietly grown alongside a trend in LLM development, namely large vocabulary sizes. The paper introduces Cut Cross Entropy (CCE), an optimised Triton kernel for computing the cross entropy loss. Taking the Gemma 2 (2B) model as an example, CCE reduces the memory footprint of the cross entropy loss computation during training from 24 GB to 1 MB, and the total training-time memory consumption of the classifier head from 28 GB to 1 GB. ...
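To get a feel for why vocabulary size drives this cost, here is a rough back-of-the-envelope sketch. It only counts a single float32 copy of the logit matrix (one row per token, one column per vocabulary entry). The token count and vocabulary sizes below are illustrative assumptions of mine, not figures taken from the paper, and the helper `logits_memory_gib` is a hypothetical name. Real training typically holds more than one buffer of this shape, so this is not an attempt to reproduce the 24 GB figure quoted above.

```python
def logits_memory_gib(num_tokens: int, vocab_size: int, bytes_per_element: int = 4) -> float:
    """Size in GiB of one (num_tokens, vocab_size) logit matrix.

    bytes_per_element defaults to 4, i.e. float32 (an assumption).
    """
    return num_tokens * vocab_size * bytes_per_element / 2**30


# Illustrative assumption: 8,192 tokens in a batch, float32 logits.
NUM_TOKENS = 8_192

for vocab_size in (32_000, 128_000, 256_000):
    gib = logits_memory_gib(NUM_TOKENS, vocab_size)
    print(f"vocab={vocab_size:>7,}  one copy of the logits ~ {gib:.2f} GiB")
```

The cost grows linearly with vocabulary size, so moving from a 32k to a 256k vocabulary multiplies this single buffer by eight, before counting any extra copies kept for the backward pass.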